I’ve been using the ASafaWeb security analyser to scan various websites that I work on. It picks up basic configuration problems in .NET websites and, very handily, will scan periodically to make sure that misconfiguration doesn’t creep back in.

ASafaWeb was created by Troy Hunt, a software architect and Microsoft MVP for Developer Security based in Australia. Troy writes some great articles on improving application security, with a focus on .NET, and he links to those articles in ASafaWeb to illustrate why and how improvements should and can be made.

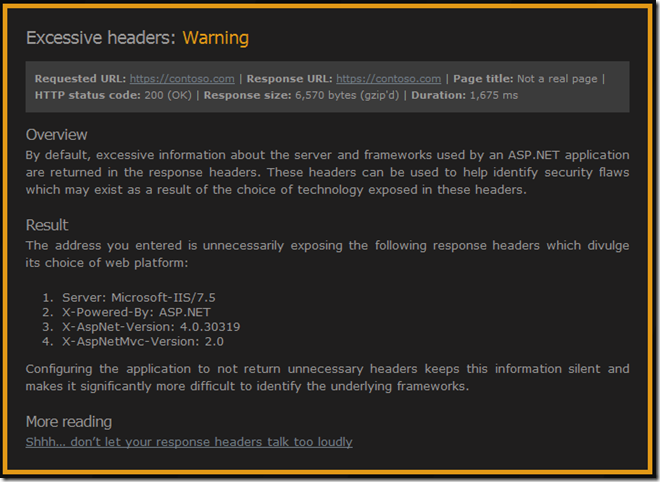

Most of the issues can be addressed in the application, generally in web.config, but the issue I’m interested in here can be solved by configuring at the server level. Here’s the output from ASafaWeb:

See that “X-Powered-By: ASP.NET” header? That one’s inherited from the IIS root configuration. We could remove it in every web.config but it’s better to remove it once, at the server level.

Troy links to his blog post on removing all of those headers, “Shhh… don’t let your response headers talk too loudly”. As he mentions, there are many different ways of removing them; he goes into the IIS Manager UI and removes the header there.

I’m trying to make sure that I script server configuration changes. This is a great way of documenting changes and allows new servers to be configured simply. I want servers that can rise like a phoenix from the metaphorical ashes of a new virtual machine rather than having a fragile snowflake that is impossible to reproduce (thanks to Martin Fowler and his ThoughtWorks colleagues for the imagery).

So, the script to remove the “X-Powered-By” header turns out to be very straightforward once you figure out the correct incantations. This assumes you have PowerShell and the Web Server (IIS) Administration Cmdlets installed.

Import-Module WebAdministration

Clear-WebConfiguration "/system.webServer/httpProtocol/customHeaders/add[@name='X-Powered-By']"

That’s a lot of words for a very little configuration change but I wanted to talk about ASafaWeb and those ThoughtWorks concepts of server configuration. I also need to mention my mate Dylan Beattie who helped me out without knowing it. How? StackOverflow of course.

Computers can be finickity about time but they’re often not very good at keeping it. My desktop computer, for instance, seems to lose time at a ridiculous rate. Computer hardware has included a variety of devices for measuring the passage of time from Programmable Interval Timers to High Precision Event Timers. Operating systems differ in the way that they use that hardware, perhaps counting ticks with the CPU or by delegating completely to a hardware device. This VMware PDF has some interesting detail.

However it’s done, it doesn’t always work. The hardware is affected by temperature and other environmental factors so we generally synchronise with a more reliable external time source, using NTP. Windows has NTP support built in, the W32Time service, but it only uses a single time server and isn’t intended for accurate time. From this post on high accuracy on the Microsoft Directory Services Team blog,

“W32time was created to assist computers to be equal to or less than five minutes (which is configurable) of each other for authentication purposes based off the Kerberos requirements”

They quote this knowledgebase article:

“We do not guarantee and we do not support the accuracy of the W32Time service between nodes on a network. The W32Time service is not a full-featured NTP solution that meets time-sensitive application needs. The W32Time service is primarily designed to do the following:

- Make the Kerberos version 5 authentication protocol work.

- Provide loose sync time for client computers.”

So, plus or minus five minutes is good enough for Kerberos authentication within a Windows domain but it’s not good enough for me.

NTPD

I’ve been experimenting with NTPD, an implementation of NTP, for more accurate time. NTPD synchronises with multiple NTP servers but only synchronises when a majority agree. I’m using the Windows binaries from Meinberg. Meinberg also have a monitoring and configuration application, called NTP Time Server Monitor, which is very useful.

The implementation is straightforward, so I won’t go into it in detail. Install the service. Install Monitor. Add some local time servers from pool.ntp.org to your config and away you go.

I do, however, want to describe an issue that we had with synchronisation on our servers. Wanting more accurate time for our application, we installed NTPD and immediately started getting clock drift. Without NTPD running, the clocks were quite stable but with it running, they would drift out by a number of seconds within a few minutes.

A quick Google led me to this FixUnix post and I read this quote from David:

“Do you have the Multi-Media timer option set? It was critical to getting good stability with my systems”

The link was broken but I eventually found this page which describes the introduction of the -M flag to NTPD for Windows. This runs the MultiMedia timer continuously, instead of allowing it to be enabled occasionally by applications like Flash.

Cutting a long story slightly shorter, it turns out that the -M flag was causing the clock drift on our Windows servers. Removing that option from the command line means that our servers are now happily synchronising with an offset of less than 300ms from the time source.
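For anyone wondering what “offset” means in that figure: NTP estimates it from four timestamps taken during a single request and response. Here is a minimal Python sketch of the standard offset and delay formulas from the NTP specification. It is not NTPD's code; the function name and the sample timestamps are made up for illustration.

```python
def ntp_offset_and_delay(t1, t2, t3, t4):
    """Estimate clock offset and round-trip delay from one NTP exchange.

    t1: time the client sent the request (client clock)
    t2: time the server received it (server clock)
    t3: time the server sent the reply (server clock)
    t4: time the client received the reply (client clock)
    All values are in seconds.
    """
    offset = ((t2 - t1) + (t3 - t4)) / 2.0
    delay = (t4 - t1) - (t3 - t2)
    return offset, delay


# Made-up sample: the client clock is 0.25 s behind the server, each network
# leg takes 0.05 s and the server spends 0.01 s handling the request.
offset, delay = ntp_offset_and_delay(t1=100.00, t2=100.30, t3=100.31, t4=100.11)
print(f"offset={offset:+.3f}s delay={delay:.3f}s")
```

The offset estimate is only exact when the outbound and return legs take the same time, which is one reason a daemon like NTPD compares several servers rather than trusting one exchange.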

Tardis

As I mentioned, my desktop computer is very inaccurate. NTPD can’t keep it in check so I’m trying a different approach – Tardis 2000. So far, so good. I’m not sure about the animated GIFs on the website though.

We’ve recently uncovered an issue with the way that I had configured web farm publishing in Microsoft Threat Management Gateway (TMG). When I say “we”, I include Microsoft Support who really got to the bottom of the problem. Of course, they’re in a privileged position as they have access to the source code of the product.

Perhaps I would have resolved it eventually. I’m thankful for MS support though. I didn’t find anything on the web to help me with this problem so, on the off chance it can help someone else, I thought I’d write it up.

The Symptoms

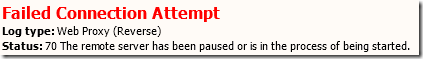

We’ve been switching our web publishing from Windows NLB to TMG web farms for balancing load to our IIS servers and we began seeing an intermittent issue. One minute we were successfully serving pages but the next minute, clients would receive an HTTP 500 error “The remote server has been paused or is in the process of being started” and a Failed Connection Attempt with HTTP Status Code 70 would appear in the TMG logs.

The issue would last for 30 to 60 seconds and then publishing would resume successfully. This would normally indicate that TMG has detected, using connectivity verifiers for the farm, that no servers are available to respond to requests. However, the servers appeared to be fine from the perspective of our monitoring system (behind the firewall) and for clients connecting in a different way (either over a VPN or via a TMG single-server publishing rule).

The (Wrong) Setup

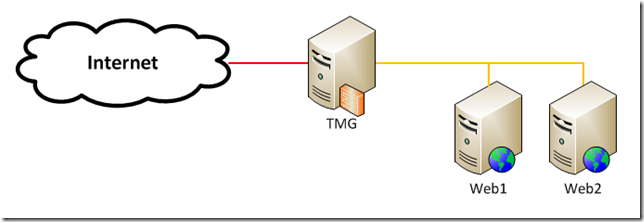

Let’s say we have a pair of web servers, Web1 and Web2, protected from the Internet by TMG.

Each web server has a number of web sites in IIS, each bound to port 80 and a different host header. All of the host headers for a single web server map to the same internal IP address like this:

| Host name | IP address |
|---|---|
| prod.admin.web1 | 172.16.0.1 |
| prod.cms.web1 | 172.16.0.1 |
| prod.static.web1 | 172.16.0.1 |
| prod.admin.web2 | 172.16.0.2 |
| prod.cms.web2 | 172.16.0.2 |
| prod.static.web2 | 172.16.0.2 |

In reality, you should fully qualify the host name (e.g. prod.admin.web1.examplecorp.local) but I haven’t for this example.

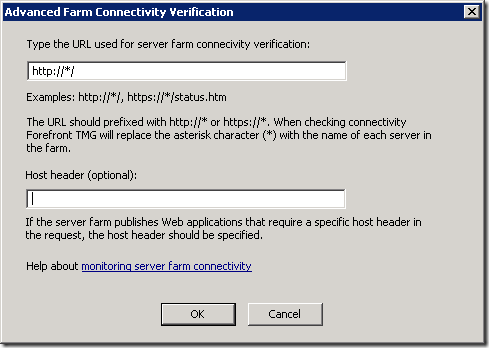

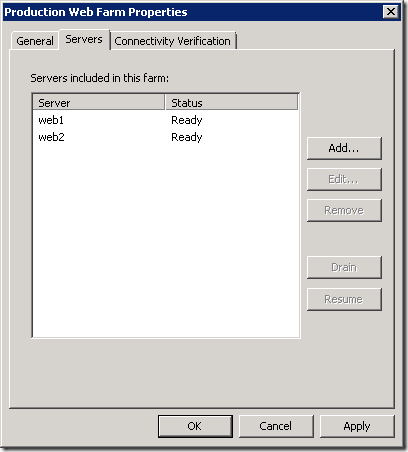

I’ll assume that you know how to publish a web farm using TMG. We have a server farm configured for each web site with each web server configured like this (N.B. this is wrong as we’ll see later):

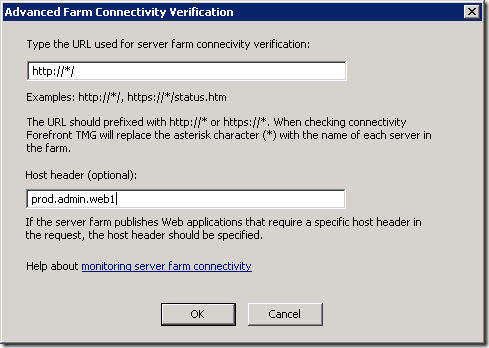

The benefit of this approach is that because we’ve specified the host header (prod.admin.web1) rather than just the server name (web1), we don’t have to specify the host header in the connectivity verifier:

This setup appears to work but under load, and as additional web sites and farm objects are added, our symptoms start to appear.

The Problem

So what was happening? TMG maintains open connections to the web servers which are part of the reverse-proxied requests from clients on the Internet. Despite the fact that all of the host headers in the farm objects resolve to the same IP address, TMG compares them based on the host name and therefore they appear to be different. This means that TMG is opening and closing connections more often than it should.
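As a rough mental model only (this is not TMG's code, and every name below is hypothetical), picture a proxy that tracks back-end servers by whatever identifier the farm object uses. The Python sketch below assumes the second server's sites are named prod.admin.web2, prod.cms.web2 and prod.static.web2. Keyed by host header name, the proxy sees six apparent servers; keyed by IP address, it sees the two that really exist.

```python
# Farm members as configured in the (wrong) setup: host header name -> IP.
FARM_MEMBERS = {
    "prod.admin.web1": "172.16.0.1",
    "prod.cms.web1": "172.16.0.1",
    "prod.static.web1": "172.16.0.1",
    "prod.admin.web2": "172.16.0.2",
    "prod.cms.web2": "172.16.0.2",
    "prod.static.web2": "172.16.0.2",
}


def distinct_backends(members, key_by):
    """Return the set of back-end identifiers seen under a given keying scheme."""
    if key_by == "name":
        return set(members.keys())
    if key_by == "ip":
        return set(members.values())
    raise ValueError(f"unknown key_by: {key_by!r}")


print(len(distinct_backends(FARM_MEMBERS, "name")))  # six apparent servers
print(len(distinct_backends(FARM_MEMBERS, "ip")))    # two real servers
```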

The Solution

The solution is to specify the servers in the server farm object using the server host name and not the host header name. You have to do this for all farm objects that are using the same servers.

You then have to specify the host header in the connectivity verifier:

You could also use the IP address of the server. This is the configuration that Jason Jones recommends but I prefer the clarity of host name over IP address. I’m trusting that DNS will work as it should and won’t add much overhead. If you need support with TMG, Jason is excellent by the way.

Conclusion

Specifying the servers by host header name seemed logical to me. It was explicit and didn’t require that element of configuration to be hidden away in the connectivity verifier.

I switched from host header to IP address as part of testing but it didn’t fix our problem. It didn’t fix the problem because I only used IP addresses for a single farm object and not all of them.

Although TMG could identify open server connections based on IP address, it doesn’t. It uses host name. This has to be taken into account when configuring farm objects. In summary, if you’re using multiple server farm objects for the same servers, make sure you specify the server name consistently. Use IP address or an identical host name.

I was lucky enough to spend a week in the Seychelles this month. If your geography is anything like mine you won’t know that the Seychelles is “an island country spanning an archipelago of 115 islands in the Indian Ocean, some 1,500 kilometres (932 mi) east of mainland Africa, northeast of the island of Madagascar” – thank you Wikipedia.

In our connected world, the Internet even reaches into the Indian Ocean so I wasn’t deprived of email, BBC News, Facebook etc. but I did begin to wonder how such a small and remote island nation is wired up. It turns out that I was online via Cable and Wireless and a local ISP that they own called Atlas.

Being a geek I thought I’d run some tests, starting with SpeedTest. The result was download and upload speeds around 0.6Mbps and a latency of around 700ms. In the UK, the average broadband speed at the end of 2010 was 6.2Mbps and I would expect latency to be less than 50ms. Of course, I went to the Seychelles for the sunshine and not the Internet but I was interested in how things were working.

So, I ran a “trace route” to see how my packets would traverse the globe back to the BBC in Blighty. I thought the Beeb was an appropriate destination.

Then I added some geographic information using MaxMind's GeoIP service and ip2location.com.

The result was this:

| Hop | IP Address | Host Name | Region Name | Country Name | ISP | Latitude | Longitude |
|---|---|---|---|---|---|---|---|
| 1 | 10.10.10.1 | | | | | | |
| 2 | 41.194.0.82 | | Pretoria | South Africa | Intelsat GlobalConnex Solutions | -25.7069 | 28.2294 |
| 3 | 41.223.219.21 | | | Seychelles | Atlas Seychelles | -4.5833 | 55.6667 |
| 4 | 41.223.219.13 | | | Seychelles | Atlas Seychelles | -4.5833 | 55.6667 |
| 5 | 41.223.219.5 | | | Seychelles | Atlas Seychelles | -4.5833 | 55.6667 |
| 6 | 203.99.139.250 | | | Malaysia | Measat Satellite Systems Sdn Bhd, Cyberjaya, Malay | 2.5 | 112.5 |
| 7 | 121.123.132.1 | | Selangor | Malaysia | Maxis Communications Bhd | 3.35 | 101.25 |
| 8 | 4.71.134.25 | so-4-0-0.edge2.losangeles1.level3.net | | United States | Level 3 Communications | 38.9048 | -77.0354 |
| 9 | 4.69.144.62 | vlan60.csw1.losangeles1.level3.net | | United States | Level 3 Communications | 38.9048 | -77.0354 |
| 10 | 4.69.137.37 | ae-73-73.ebr3.losangeles1.level3.net | | United States | Level 3 Communications | 38.9048 | -77.0354 |
| 11 | 4.69.132.9 | ae-3-3.ebr1.sanjose1.level3.net | | United States | Level 3 Communications | 38.9048 | -77.0354 |
| 12 | 4.69.135.186 | ae-2-2.ebr2.newyork1.level3.net | | United States | Level 3 Communications | 38.9048 | -77.0354 |
| 13 | 4.69.148.34 | ae-62-62.csw1.newyork1.level3.net | | United States | Level 3 Communications | 38.9048 | -77.0354 |
| 14 | 4.69.134.65 | ae-61-61.ebr1.newyork1.level3.net | | United States | Level 3 Communications | 38.9048 | -77.0354 |
| 15 | 4.69.137.73 | ae-43-43.ebr2.london1.level3.net | | United States | Level 3 Communications | 38.9048 | -77.0354 |
| 16 | 4.69.153.138 | ae-58-223.csw2.london1.level3.net | | United States | Level 3 Communications | 38.9048 | -77.0354 |
| 17 | 4.69.139.100 | ae-24-52.car3.london1.level3.net | | United States | Level 3 Communications | 38.9048 | -77.0354 |
| 18 | 195.50.90.190 | | London | United Kingdom | Level 3 Communications | 51.5002 | -0.1262 |
| 19 | 212.58.238.169 | | Tadworth, Surrey | United Kingdom | BBC | 51.2833 | -0.2333 |
| 20 | 212.58.239.58 | | London | United Kingdom | BBC | 51.5002 | -0.1262 |
| 21 | 212.58.251.44 | | Tadworth, Surrey | United Kingdom | BBC | 51.2833 | -0.2333 |
| 22 | 212.58.244.69 | www.bbc.co.uk | Tadworth, Surrey | United Kingdom | BBC | 51.2833 | -0.2333 |

What does that tell me? Well, I ignored the first hop on the local network and the second looks wrong as we jump to South Africa and back again. The first stop on our journey is Malaysia, 3958 miles away.

We then travel to the west coast of America, Los Angeles. Another 8288 miles.

We wander around California. The latitude/longitude information isn’t that accurate so I’m basing this on the host name but we hop around LA and then to San Jose followed by New York. Only 2462 miles.

We rattle around New York and then around London, 3470 miles across the pond.

Our final destination is Tadworth in Surrey, just outside of London.

That’s just over eighteen thousand miles (and back) in less than a second – not bad, I say.
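The mileage figures can be approximated with a great-circle (haversine) calculation between coordinates from the table. This Python sketch shows one way to do it, and is not necessarily how the numbers above were produced. The function name and the Earth radius of 3,958.8 miles are assumptions, and the result depends on which hop's coordinates you pick.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean radius; an approximation


def great_circle_miles(lat1, lon1, lat2, lon2):
    """Haversine distance in miles between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


# Coordinates from the table: hop 5 (Seychelles) and hop 6 (Malaysia).
seychelles = (-4.5833, 55.6667)
malaysia = (2.5, 112.5)
print(round(great_circle_miles(*seychelles, *malaysia)))

# The four legs quoted in the post, added together.
legs = [3958, 8288, 2462, 3470]
print(sum(legs))  # 18178
```

Since the GeoIP coordinates are coarse (every Level 3 hop reports the same point), the host names remain a better guide to the route than the latitude and longitude columns.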

p.s. don’t worry, I spent most of the time by the pool and not in front of a computer.

I’ve just finished switching my blog from WordPress on TsoHost to BlogEngine.Net on Arvixe. I got an account with Arvixe following a recommendation from a colleague, who also switched from TsoHost.

The reasons for switching? Well, Arvixe allows me to use .NET 4 and SQL Server databases (which I wanted for an Umbraco CMS site that I’m now running) plus I got a promotional rate from Arvixe. Splendid.

Getting BlogEngine.NET up and running was easy but I did go back and forth trying to get the correct application settings for the server. I had a few HTTP 500 errors with nothing else to go on but experience. The Arvixe control panel is a little bit slow, which was frustrating.

The migration was fairly straightforward – I only had a couple of posts to transfer so I did them manually and then tweaked a little. I’d like to add some pictures to the blog theme – it’s a bit too clean at the moment.

Of course, hosts and blog engines and themes are a means to an end – I’m going to try and get some posts up too!

I’m at my first BarCamp this weekend (BarCamp London 8). I decided to do my "HTML5 basics" presentation again. I think it went quite well – there are a few bits that I could have explained better and a projector issue meant that my slides were yellow where they should have been white, but otherwise good. It was a room for 30 and it was standing room only (which is down to interest in HTML5 rather than me of course).

Here are the resources that I pointed to in the presentation. These are some of the best HTML5 resources that I've found so far. Hope you find them as helpful as I have.

We’re halfway through Sunday and I can say that BarCamp has been great. The BCL team are quite practised at conferences now and everything is running smoothly. It’s pretty remarkable for a free conference. Many thanks to everyone involved.

I did a brief presentation entitled "HTML5 basics" at the London .NET User Group Open Mike night on Wednesday. I had a great time!

Open Mike night is intended to introduce new speakers to the community. I've been going to community stuff in London for a couple of years and I thought this was a good opportunity to step up and give something back.

There were four other presentations, which were all excellent:

I gained something watching all of them. I hope others got something from my talk.

Thanks to Toby for organising the night and to Michelle and EMC Consulting for organising the venue and drinks.

So, I wanted to post the resources that I pointed to in the presentation. These are some of the best HTML5 resources that I've found so far:

Hope you find them as helpful as I have.

EDIT: Toby's blogged about the event and upcoming events and one of the attendees has written a little summary. I'd agree that Sara and Rachel stole the show with their sketch to explain pair programming. I might have to use interpretive dance to describe HTML5 next time!