We’ve recently uncovered an issue with the way that I had configured web farm publishing in Microsoft Threat Management Gateway (TMG). When I say “we”, I include Microsoft Support who really got to the bottom of the problem. Of course, they’re in a privileged position as they have access to the source code of the product.

Perhaps I would have resolved it eventually. I’m thankful for MS support though. I didn’t find anything on the web to help me with this problem so, on the off chance it can help someone else, I thought I’d write it up.

The Symptoms

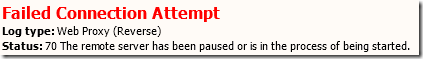

We’ve been switching our web publishing from Windows NLB to TMG web farms to balance load across our IIS servers, and we began seeing an intermittent issue. One minute we were serving pages successfully; the next, clients would receive an HTTP 500 error, “The remote server has been paused or is in the process of being started”, and a Failed Connection Attempt with HTTP Status Code 70 would appear in the TMG logs.

The issue would last for 30 to 60 seconds and then publishing would resume successfully. This would normally indicate that TMG has detected, using connectivity verifiers for the farm, that no servers are available to respond to requests. However, the servers appeared to be fine from the perspective of our monitoring system (behind the firewall) and for clients connecting in a different way (either over a VPN or via a TMG single-server publishing rule).

The (Wrong) Setup

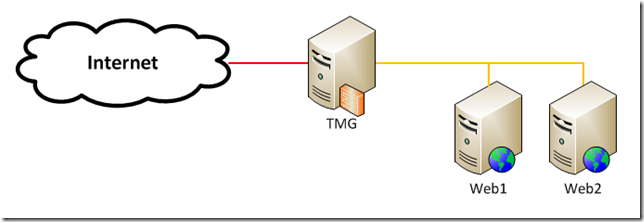

Let’s say we have a pair of web servers, Web1 and Web2, protected from the Internet by TMG.

Each web server has a number of web sites in IIS, each bound to port 80 and a different host header. All of the host headers for a single web server map to the same internal IP address like this:

| Host name | IP address |
| --- | --- |
| prod.admin.web1 | 172.16.0.1 |
| prod.cms.web1 | 172.16.0.1 |
| prod.static.web1 | 172.16.0.1 |
| prod.admin.web2 | 172.16.0.2 |
| prod.cms.web2 | 172.16.0.2 |
| prod.static.web2 | 172.16.0.2 |

In reality, you should fully qualify the host name (e.g. prod.admin.web1.examplecorp.local) but I haven’t for this example.

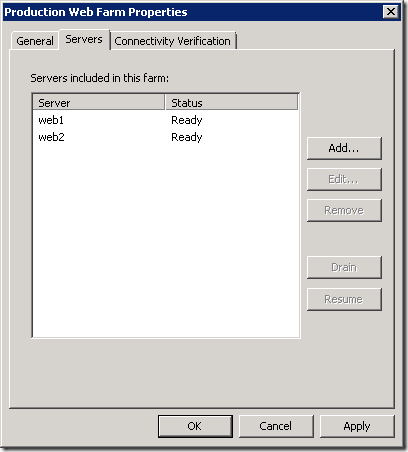

I’ll assume that you know how to publish a web farm using TMG. We have a server farm configured for each web site with each web server configured like this (N.B. this is wrong as we’ll see later):

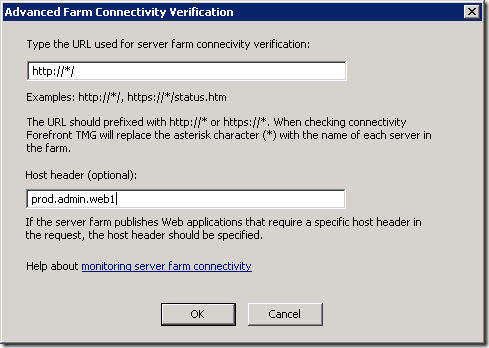

The benefit of this approach is that because we’ve specified the host header (prod.admin.web1) rather than just the server name (web1), we don’t have to specify the host header in the connectivity verifier.

This setup appears to work but under load, and as additional web sites and farm objects are added, our symptoms start to appear.

The Problem

So what was happening? TMG maintains open connections to the web servers, which it uses to carry the reverse-proxied requests from clients on the Internet. Despite the fact that all of the host headers in the farm objects resolve to the same IP address, TMG compares them based on the host name and therefore they appear to be different servers. This means that TMG is opening and closing connections more often than it should.
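To make the difference concrete, here is a toy model of the bookkeeping. It is not TMG’s actual implementation (I don’t have the source code); the `DNS` table and the `distinct_backends` function are invented for illustration. It only shows how keying by name, rather than by resolved IP address, turns one physical server into several apparent ones.

```python
from typing import Dict, List, Set

# Hypothetical DNS view of Web1: every name resolves to the same IP address.
DNS: Dict[str, str] = {
    "prod.admin.web1": "172.16.0.1",
    "prod.cms.web1": "172.16.0.1",
    "prod.static.web1": "172.16.0.1",
    "web1": "172.16.0.1",
}


def distinct_backends(farm_servers: List[str], key_by_name: bool) -> int:
    """Count how many separate back ends a proxy would think it is talking to."""
    keys: Set[str] = set()
    for name in farm_servers:
        # Keying by name treats each host header as its own server;
        # keying by IP collapses them onto the one real machine.
        keys.add(name if key_by_name else DNS[name])
    return len(keys)


# One entry per farm object, all pointing at the same physical server.
wrong_setup = ["prod.admin.web1", "prod.cms.web1", "prod.static.web1"]
fixed_setup = ["web1", "web1", "web1"]

print(distinct_backends(wrong_setup, key_by_name=True))   # 3
print(distinct_backends(wrong_setup, key_by_name=False))  # 1
print(distinct_backends(fixed_setup, key_by_name=True))   # 1
```

With name-based keys, the only way to get back to a single apparent server is to use the same name everywhere.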

The Solution

The solution is to specify the servers in the server farm object using the server host name and not the host header name. You have to do this for all farm objects that are using the same servers.

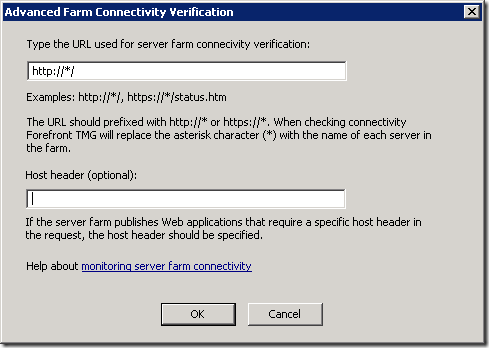

You then have to specify the host header in the connectivity verifier.

You could also use the IP address of the server. This is the configuration that Jason Jones recommends but I prefer the clarity of host name over IP address. I’m trusting that DNS will work as it should and won’t add much overhead. If you need support with TMG, Jason is excellent by the way.
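If it helps to picture what a connectivity verifier with an explicit host header does, the sketch below connects to the server by its own name but asks for a specific site. It is a rough Python approximation, not what TMG runs internally; `verifier_request` and `run_verifier` are names I made up, and treating any 2xx status as healthy is my assumption.

```python
import http.client


def verifier_request(server: str, host_header: str, path: str = "/") -> dict:
    """Describe a probe: connect to `server`, but request the site `host_header`."""
    return {
        "connect_to": server,
        "method": "GET",
        "path": path,
        "headers": {"Host": host_header},
    }


def run_verifier(probe: dict, port: int = 80, timeout: float = 5.0) -> bool:
    """Send the probe; any 2xx response counts as healthy (an assumption)."""
    conn = http.client.HTTPConnection(probe["connect_to"], port, timeout=timeout)
    try:
        # Supplying our own Host header stops http.client from adding one
        # based on the name we connected to.
        conn.request(probe["method"], probe["path"], headers=probe["headers"])
        return 200 <= conn.getresponse().status < 300
    except (OSError, http.client.HTTPException):
        return False
    finally:
        conn.close()


# The farm member is "web1"; the site being checked is "prod.admin.web1".
probe = verifier_request("web1", "prod.admin.web1")
```

Calling `run_verifier(probe)` from a machine that can reach web1 would then check the admin site without the farm object needing to know its host header.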

Conclusion

Specifying the servers by host header name seemed logical to me. It was explicit and didn’t require that element of configuration to be hidden away in the connectivity verifier.

I switched from host header to IP address as part of testing but it didn’t fix our problem, because I only changed a single farm object to use IP addresses and not all of them.

Although TMG could identify open server connections based on IP address, it doesn’t. It uses host name. This has to be taken into account when configuring farm objects. In summary, if you’re using multiple server farm objects for the same servers, make sure you specify the server name consistently: use either the IP address or an identical host name in every farm object.
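A check like the following could catch the mistake before it bites. It is only a sketch: the farm names, the `farms` dictionary and the `resolve` lookup table are hypothetical stand-ins for however you export your TMG configuration and DNS records, not anything TMG provides.

```python
from collections import defaultdict
from typing import Dict, List


def inconsistent_servers(
    farms: Dict[str, List[str]], resolve: Dict[str, str]
) -> Dict[str, List[str]]:
    """Return each IP address that is referenced under more than one name."""
    names_by_ip = defaultdict(set)
    for servers in farms.values():
        for name in servers:
            # An IP literal is not in the lookup table, so it maps to itself.
            names_by_ip[resolve.get(name, name)].add(name)
    return {ip: sorted(names) for ip, names in names_by_ip.items() if len(names) > 1}


# Hypothetical farm objects: the CMS farm still uses a host header name for Web1.
farms = {
    "admin-farm": ["web1", "web2"],
    "cms-farm": ["prod.cms.web1", "web2"],
}
resolve = {
    "web1": "172.16.0.1",
    "web2": "172.16.0.2",
    "prod.cms.web1": "172.16.0.1",
}

print(inconsistent_servers(farms, resolve))
# {'172.16.0.1': ['prod.cms.web1', 'web1']}
```

Mixing an IP address in one farm object with a host name in another is flagged in the same way, which is exactly the trap I fell into during testing.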